Hi,

I'm using a Teensy 3.5 to sample an incoming 520Hz (~2ms/cycle) square-wave signal. I want to sample the waveform 20 times/cycle, so I set the timing delay to 95.7uSec (empirically derived number). Here is the code:

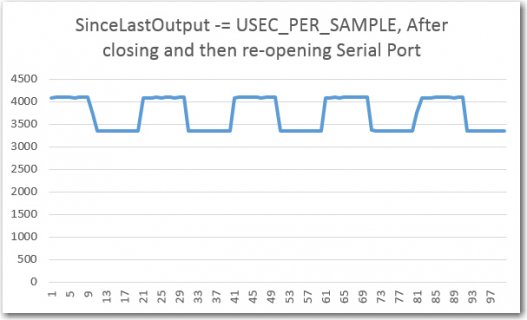

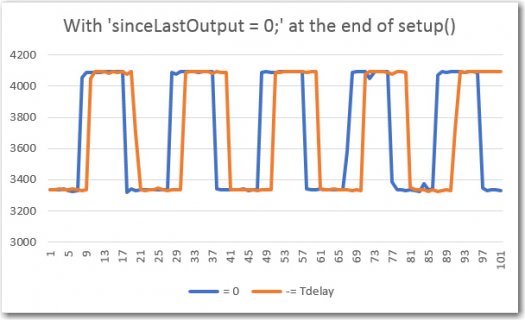

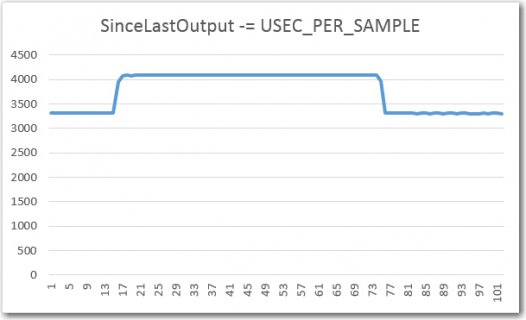

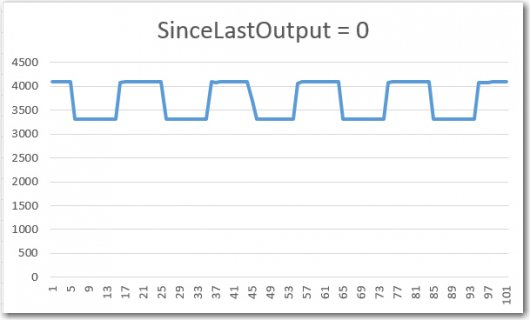

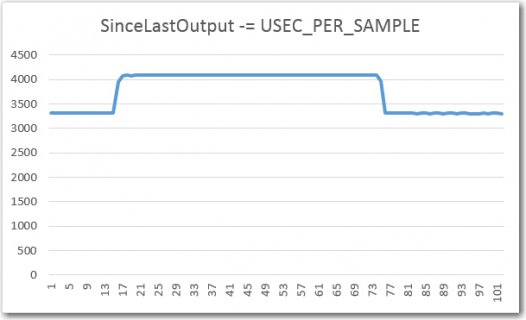

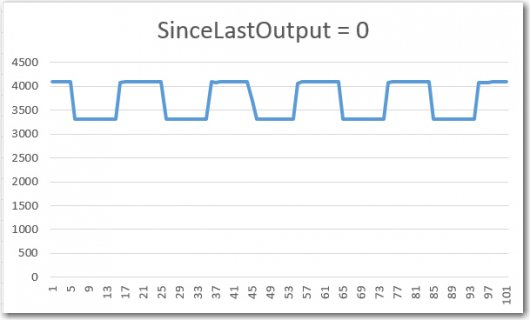


Code:

```cpp
#include <ADC.h>

ADC *adc = new ADC(); // adc object

const int OUTPUT_PIN = 32;  //lower left pin
const int IRDET1_PIN = A0;  //aka pin 14
const float USEC_PER_SAMPLE = 95.7; //value that most nearly zeroes beat-note
const int SENSOR_PIN = A0;

int sample_count = 0; //this counts from 0 to 1279
elapsedMicros sinceLastOutput;

void setup()
{
  Serial.begin(115200);
  pinMode(OUTPUT_PIN, OUTPUT);
  pinMode(IRDET1_PIN, INPUT);
  digitalWrite(OUTPUT_PIN, LOW);

  //decreases conversion time from ~15 to ~5uSec
  adc->setConversionSpeed(ADC_CONVERSION_SPEED::HIGH_SPEED);
  adc->setSamplingSpeed(ADC_SAMPLING_SPEED::HIGH_SPEED);
  adc->setResolution(12);
  adc->setAveraging(1);
}

void loop()
{
  //this runs every 95.7uSec
  if (sinceLastOutput > USEC_PER_SAMPLE)
  {
    sinceLastOutput = 0;
    //sinceLastOutput -= USEC_PER_SAMPLE;

    //start of timing pulse
    digitalWrite(OUTPUT_PIN, HIGH);

    // Step1: Collect a 1/4 cycle group of samples and sum them into a single value
    // this section executes each USEC_PER_SAMPLE period
    int samp = adc->analogRead(SENSOR_PIN);
    Serial.print(sample_count); Serial.print("\t"); Serial.println(samp);
    sample_count++; //checked in step 6 to see if data capture is complete

    digitalWrite(OUTPUT_PIN, LOW);
  }//if (sinceLastOutput > 95.7)
}//loop
```

The problem I'm having is that I get two completely different results depending on whether I use the `sinceLastOutput = 0` or the `sinceLastOutput -= USEC_PER_SAMPLE` version of the elapsedMicros adjustment, as shown in the Excel sample plots. Each plot shows the first 100 or so samples. The plots should be almost identical, but they differ by a factor of about 5:1, which I can't explain. This is perfectly repeatable, and is driving me nuts.
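To make the two variants concrete, here is a minimal host-side C++ sketch of the two reset strategies. It is a simplified model, not Teensy code, and it rests on assumptions I have not verified on the hardware: that the elapsedMicros value is a whole number of microseconds (so subtracting 95.7 removes only 95), that loop() polls about once per microsecond, and that the sampling block itself takes no time. The names `ResetMode`, `simulateFireTimes` and `steadyStatePeriod` are made up for this illustration.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical host-side model of the two reset strategies (not Teensy code).
enum class ResetMode { Zero, Subtract };

// Returns the times (in microseconds) at which the sampling block would run.
std::vector<uint32_t> simulateFireTimes(ResetMode mode, float usecPerSample,
                                        uint32_t totalUsec) {
    std::vector<uint32_t> fireTimes;
    uint32_t elapsed = 0;  // stands in for elapsedMicros (assumed whole microseconds)
    for (uint32_t now = 1; now <= totalUsec; ++now) {
        ++elapsed;  // assumption: loop() polls once per microsecond
        if (static_cast<float>(elapsed) > usecPerSample) {
            fireTimes.push_back(now);
            if (mode == ResetMode::Zero) {
                elapsed = 0;  // models "sinceLastOutput = 0"
            } else {
                // models "sinceLastOutput -= USEC_PER_SAMPLE" under the
                // assumption that the float is truncated to an integer
                elapsed -= static_cast<uint32_t>(usecPerSample);
            }
        }
    }
    return fireTimes;
}

// Interval between the last two firings, i.e. the steady-state sample period.
uint32_t steadyStatePeriod(const std::vector<uint32_t>& fireTimes) {
    if (fireTimes.size() < 2) return 0;
    return fireTimes[fireTimes.size() - 1] - fireTimes[fireTimes.size() - 2];
}
```

Calling `simulateFireTimes(ResetMode::Zero, 95.7f, 10000)` and `simulateFireTimes(ResetMode::Subtract, 95.7f, 10000)` and passing each result to `steadyStatePeriod` gives 96 and 95 microseconds respectively in this model, against a nominal 1e6 / (520 × 20) ≈ 96.15 microseconds per sample. Whether a difference like this accounts for the plots is untested; on the real board the ADC read and the Serial prints add time that the model ignores.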

Any ideas what I'm doing wrong here?

TIA,

Frank