Hey y'all

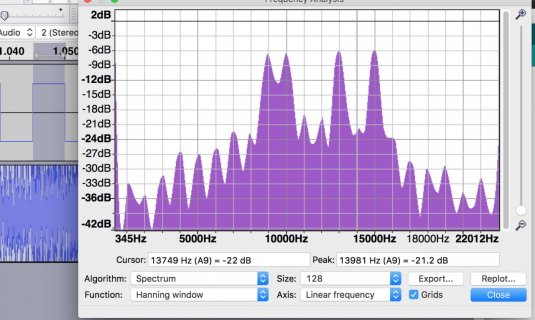

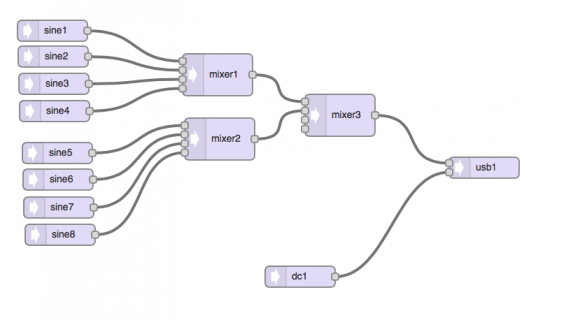

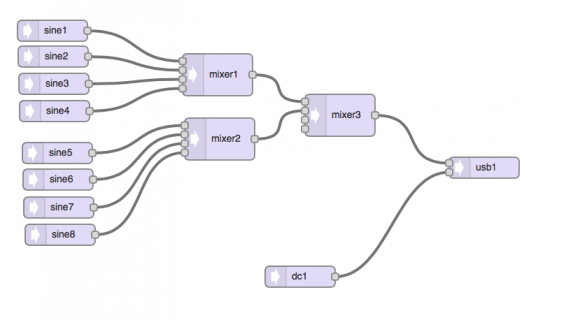

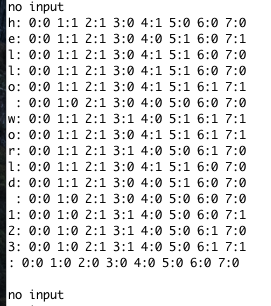

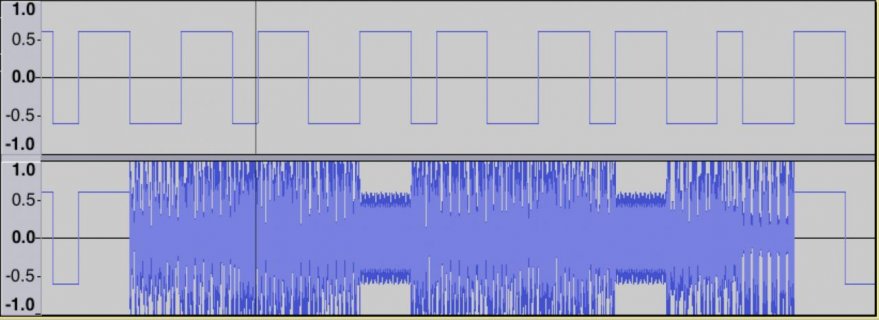

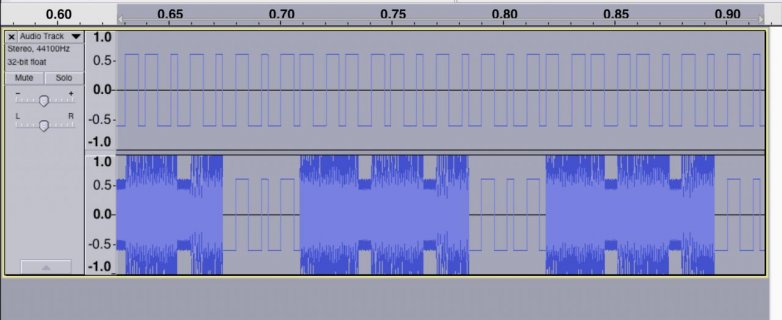

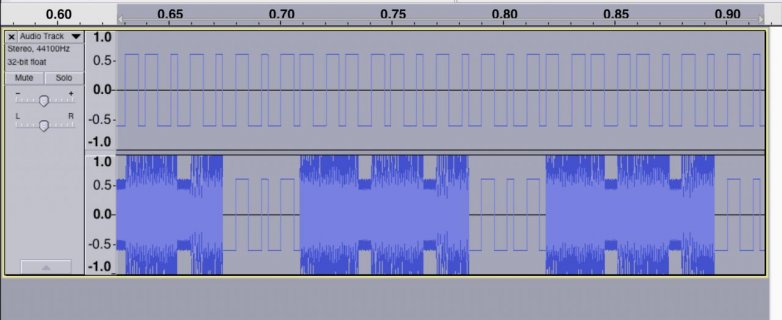

So I'm running into some problems I haven't been able to figure out. I am generating 8 sine waves, each sine representing one bit of a char. I set the amplitude of each sine wave, hold it for 5 ms, and then encode the next char. I also synthesize a DC waveform whose amplitude toggles between 0.6 and -0.6 between chars. Right now I am just outputting the audio from a Teensy 3.6 via USB to my Mac, where I can record it with Audacity. Later, when I'm happy with the signals, I'll switch over to the Teensy Audio Shield.
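
To make the bit-to-tone mapping concrete, here is a minimal sketch in plain C++ (no Teensy library needed). `charToAmplitudes` and `on_level` are hypothetical names used only for illustration; the mapping assumes the most significant bit goes to the first sine, as in the loop code of this post.

```cpp
#include <array>
#include <cstdint>

// Hypothetical helper, not part of the Teensy Audio library.
// Maps one byte to eight sine amplitudes: element 0 carries the most
// significant bit (the 8 kHz tone in this post), element 7 the least.
std::array<float, 8> charToAmplitudes(uint8_t key, float on_level) {
  std::array<float, 8> amps{};  // all tones off by default
  for (int i = 0; i < 8; i++) {
    bool bit_set = ((key >> (7 - i)) & 1u) != 0;
    amps[i] = bit_set ? on_level : 0.0f;
  }
  return amps;
}
```

For 'h' (0x68, binary 01101000) this turns on elements 1, 2, and 4, i.e. the 9, 10, and 12 kHz tones.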

I actually have this working... sort of. There are some quirky things going on. For example, the DC waveform is only supposed to be on the L channel, but it shows up on both L and R. If I don't have an AudioOutputI2S object declared, there is no audio output through USB?? And adding other variables to measure the delay (using micros()) between encoding chars completely changes the audio signal.

Here are my instantiations of the audio objects. The AudioOutputI2S is declared but never used. Nothing will output through USB if it's not there.

Code:

```cpp
#include <Audio.h>
#include <Wire.h>
#include <SPI.h>
#include <SD.h>
#include <SerialFlash.h>

// One sine per bit: 8 elements, since mySin[0]..mySin[7] are all connected below.
AudioSynthWaveformSine mySin[8];
AudioSynthWaveformDc   square_clk;   // DC level toggled between chars (the clock)
AudioMixer4            mixer1;       // sums sines 0..3
AudioMixer4            mixer2;       // sums sines 4..7
AudioMixer4            mixer3;       // sums mixer1 and mixer2
AudioOutputI2S         i2s2_output;  // declared but not connected
AudioOutputUSB         usb1;

AudioConnection patchCord1(mySin[0], 0, mixer1, 0);
AudioConnection patchCord2(mySin[1], 0, mixer1, 1);
AudioConnection patchCord3(mySin[2], 0, mixer1, 2);
AudioConnection patchCord4(mySin[3], 0, mixer1, 3);
AudioConnection patchCord5(mySin[4], 0, mixer2, 0);
AudioConnection patchCord6(mySin[5], 0, mixer2, 1);
AudioConnection patchCord7(mySin[6], 0, mixer2, 2);
AudioConnection patchCord8(mySin[7], 0, mixer2, 3);
AudioConnection patchCord9(mixer1, 0, mixer3, 0);
AudioConnection patchCord10(mixer2, 0, mixer3, 1);
AudioConnection patchCord11(square_clk, 0, usb1, 0);  // clock -> USB channel 0 (L)
AudioConnection patchCord12(mixer3, 0, usb1, 1);      // data  -> USB channel 1 (R)
```
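
A rough headroom check for the mixer tree above, sketched in plain C++. It assumes the mixers are left at unity gain (the library default, as far as I know) and that full scale is 1.0; `worstCasePeak` and `safeAmplitude` are hypothetical helper names, not library functions.

```cpp
// Worst-case peak when `tones` sines of amplitude `amp` line up in phase
// and are summed with unity mixer gain. Full scale is assumed to be 1.0.
constexpr float worstCasePeak(int tones, float amp) {
  return tones * amp;
}

// Largest per-tone amplitude that keeps the sum within full scale,
// leaving a fraction `headroom` (0..1) of the range unused.
constexpr float safeAmplitude(int tones, float headroom) {
  return (1.0f - headroom) / tones;
}
```

Under those assumptions, eight tones at 0.6 could peak well above full scale for chars with many bits set, while 0.125 per tone (or a mixer gain that has the same effect) would keep the worst case at exactly 1.0.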

Set things up

Code:

```cpp
//unsigned long delay_u = 0;
//unsigned long time_u = 0;

char c[] = "hello world 123";  // message to send
int count = 0;                 // index of the current slot
int clk = 1;                   // current clock state
int my_freq[] = {8000, 9000, 10000, 11000, 12000, 13000, 14000, 15000};  // Hz, one per bit

void setup() {
  AudioMemory(50);

  // Assign a frequency to each sine and start with every tone off.
  AudioNoInterrupts();
  for (int i = 0; i < 8; i++) {
    mySin[i].frequency(my_freq[i]);
    mySin[i].amplitude(0);
  }
  AudioInterrupts();

  delay(1000);
}
```

The unsigned long delay_u and time_u variables are there to measure the delay between chars; I have them commented out because they were screwing things up. The "hello world" message is just repeated over and over, with a 5-char gap between each repeat.

The Loop

Code:

```cpp
void loop() {
  //time_u = micros();
  int len = sizeof(c);  // sizeof counts the terminating '\0', so it is sent as an all-zero char

  // Advance to the next slot. Values from len up to len+5 are the idle gap between repeats.
  if (count < len + 5) {
    count++;
  } else {
    count = 0;
  }

  AudioNoInterrupts();

  // Toggle the clock on every slot, data or idle.
  if (clk == 1) {
    clk = 0;
    square_clk.amplitude(-0.6);
  } else {
    clk = 1;
    square_clk.amplitude(0.6);
  }

  if (count < len) {
    // Data slot: one sine per bit, most significant bit on mySin[0].
    char key = c[count];
    for (int i = 0; i < 8; i++) {
      if (bitRead(key, 7 - i) == 1) {
        mySin[i].amplitude(0.6);
      } else {
        mySin[i].amplitude(0);
      }
    }
  } else {
    // No data: turn every sine off.
    for (int i = 0; i < 8; i++) {
      mySin[i].amplitude(0);
    }
  }

  AudioInterrupts();

  //delay_u = micros() - time_u;
  //delayMicroseconds(5E3 - delay_u);
  delay(5);
} // end loop
```

The waveform

So you can see the clock is on both the R and L channels, and the clock timing isn't as steady as I would like. However, the clk period is consistent with the period of the data, so I can still decode it.
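
One possible contributor to the uneven clock timing, sketched under assumptions that are not stated in this post: the audio library processes samples in fixed-size blocks, and amplitude changes only take effect when the next block is generated. The block size (128 samples) and sample rate (nominally 44.1 kHz) below are assumed library defaults, and the function names are hypothetical.

```cpp
#include <cmath>

// Assumed defaults of the Teensy Audio library (not confirmed in this post).
constexpr double kSampleRateHz = 44100.0;  // nominal sample rate
constexpr int    kBlockSamples = 128;      // samples per audio block

// Duration of one audio block in milliseconds.
double blockPeriodMs() {
  return 1000.0 * kBlockSamples / kSampleRateHz;
}

// Requested slot length rounded to a whole number of blocks (at least one).
int slotBlocks(double slot_ms) {
  int n = static_cast<int>(std::lround(slot_ms / blockPeriodMs()));
  return n < 1 ? 1 : n;
}

// Slot length in milliseconds after rounding to whole blocks.
double quantizedSlotMs(double slot_ms) {
  return slotBlocks(slot_ms) * blockPeriodMs();
}
```

With these assumed values a block lasts about 2.9 ms, so a 5 ms slot is roughly 1.72 blocks and the observed slot length would alternate between one and two blocks. Rounding to two blocks gives a slot of about 5.8 ms that lines up with block boundaries.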

Subtracting out the delay between encoding each char doesn't work either. It didn't fix the clk period, and for whatever reason it added the clk and sine waveforms together...
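
An alternative way to structure the compensation, as a sketch only: instead of subtracting a measured delay from each wait, keep an absolute deadline and advance it by exactly one period, so per-slot error does not accumulate. `slotElapsed` is a hypothetical helper written in plain C++; on the Teensy, `now_us` would be the value returned by micros().

```cpp
#include <cstdint>

// Hypothetical helper: returns true once `period_us` microseconds have
// passed since `*last_us`, and advances `*last_us` by exactly one period.
// Unsigned subtraction keeps the comparison correct across timer wraparound.
bool slotElapsed(uint32_t now_us, uint32_t* last_us, uint32_t period_us) {
  if (static_cast<uint32_t>(now_us - *last_us) >= period_us) {
    *last_us += period_us;  // advance by the period, not to `now_us`
    return true;
  }
  return false;
}
```

In loop() this would replace delay(5): poll `slotElapsed(micros(), &last, 5000)` each pass and only encode the next char when it returns true.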

Any good ideas? I'm out lol. I realize I am doing something the library wasn't made to do.

Thanks for the help! I love these boards!

FYI the purpose of this is to encode data into the audio channels of recording devices.