Wow, that's a good one. My first guess would be errors in the FFT get passed back through the iFFT. I'd use a good signal generator and feed the FFT with various sine waves, examining the FFT output for anything not at the frequency of your incoming sine wave. The filter windows might help. I'd use the opposite approach on the inverse FFT side, feed it numbers for one frequency band at a time, and with an oscilloscope see how nice the sine waves are that come out. Kind of brute force, there may be better ideas.

You are using an out of date browser. It may not display this or other websites correctly.

You should upgrade or use an alternative browser.

You should upgrade or use an alternative browser.

Fast Convolution Filtering with Teensy 4.0 and audio board

- Thread starter DD4WH

- Start date

- Status

- Not open for further replies.

I implemented the convolution, it works but a noise appears with the output signal...may you help me to understand how to cancel this noise ?

Thanks and best regards

Hard to guess if you don't follow the forum rule and post your code... What kind of noise? Do you have any

data/graph/spectra to show?

Been turning out a single-channel version, uses about ~16 bytes per tap as a consequence, example includes a 1920-tap

filter which runs with 3.8% CPU on T4 @600MHz, and 38k or so memory.

Files of interest: filter_unipart.h, filter_unipart.cpp, examples/Synthesis/UniformlyPartitionedFilter/UniformlyPartitionedFilter.ino

branch: https://github.com/MarkTillotson/Audio/tree/uniformly_partitioned_convolve

I tried to keep the code as concise as possible and make use of all the CMSIS calls I could. Uses _real_ float32 FFTs and only

stores the non-negative frequency bins internally to halve memory use and speed things up.

filter_unipart.h:

filter_unipart.cpp:

filter which runs with 3.8% CPU on T4 @600MHz, and 38k or so memory.

Files of interest: filter_unipart.h, filter_unipart.cpp, examples/Synthesis/UniformlyPartitionedFilter/UniformlyPartitionedFilter.ino

branch: https://github.com/MarkTillotson/Audio/tree/uniformly_partitioned_convolve

I tried to keep the code as concise as possible and make use of all the CMSIS calls I could. Uses _real_ float32 FFTs and only

stores the non-negative frequency bins internally to halve memory use and speed things up.

filter_unipart.h:

Code:

#ifndef filter_unipart_h_

#define filter_unipart_h_

#include "Arduino.h"

#include "AudioStream.h"

#include "arm_math.h"

class AudioFilterUnipart : public AudioStream

{

public:

AudioFilterUnipart(void) : AudioStream(1, inputQueueArray)

{

const int L = AUDIO_BLOCK_SAMPLES ; // less cumbersome name

const int N = 2*L ; // size of FFT, two audio blocks

arm_rfft_fast_init_f32 (&fft_instance, N) ;

prev_blk = NULL ;

filt_spectra = NULL ;

delay_line = NULL ;

Npart = -1 ; // signals no valid filter

active = false ;

}

virtual void update (void);

// Set the filter from FIR coefficients, makes active - unless coeffs == NULL, which stops and releases resources

void setFIRCoefficients (int size, float * coeffs) ;

void pause (void) ;

void resume (void) ;

int partitionCount (void) ;

int heapUsage (void) ;

private:

audio_block_t * inputQueueArray[1];

arm_rfft_fast_instance_f32 fft_instance ;

float in_array [2 * AUDIO_BLOCK_SAMPLES] ; // float32 input samples

float out_spectra [2 * 2*AUDIO_BLOCK_SAMPLES] ; // complex32 accumulator for spectra

float out_array [2 * AUDIO_BLOCK_SAMPLES] ; // float32 output samples

float * filt_spectra ; // the partitioned spectra, allocated dynamically

float * delay_line ; // successive FFTs go in here, allocated dynamically

audio_block_t * prev_blk ;

int state ; // indexes the delay line and is -1 to stop everything

volatile int Npart ; // number of partitions, each of size L

volatile bool active ; // number of partitions, each of size L

} ;

#endiffilter_unipart.cpp:

Code:

#include "filter_unipart.h"

#include "utility/dspinst.h"

#if defined(__ARM_ARCH_7EM__)

// reconstruct negative frequency bins, and nyquist bin (set to zero)

// this is used to reduce workload and workspace as only real data is assumed

inline void reconstruct_neg_freqs (float * spect, int Npoints)

{

int i = 2 ;

int j = 2 * Npoints - 2 ;

while (j > i)

{

spect[j] = spect[i++];

spect[j+1] = -spect[i++];

j -= 2 ;

}

spect[j] = 0.0 ;

spect[j+1] = 0.0 ;

}

inline void mul_acc_spectrum (float * filt_spectrum,

float * in_spectrum,

float * out_spectrum,

int Nbins)

{

float out_temp [2*Nbins] ; // need temporary storage as CMSIS lacks mulAcc for complex.

arm_cmplx_mult_cmplx_f32 (filt_spectrum, in_spectrum, out_temp, Nbins) ; // multiply...

arm_add_f32 (out_temp, out_spectrum, out_spectrum, 2*Nbins) ; // ...accumulate

}

// modular inc/dec that works for negative inc. C % operator is non-mathematical alas.

inline void inc_mod (int & a, int inc, int b)

{

a = (a + inc + b) % b ;

}

void AudioFilterUnipart::update (void)

{

if (!active)

{

audio_block_t * block = receiveWritable();

if (block != NULL)

release (block) ;

return ;

}

const int L = AUDIO_BLOCK_SAMPLES ; // processing chunk size

const int N = 2*L ; // FFT size, two chunks, overlap-save style.

audio_block_t * block = receiveWritable(); // passed down via prev_blk to out_blk, so not readOnly

audio_block_t * out_blk = NULL ;

// the in_array gets the previous and current blocks samples (FFT covers 2 blocks) and we have

// to handle lack of blocks as if were zero:

if (prev_blk == NULL)

{

arm_fill_f32 (0.0, in_array, L) ;

out_blk = allocate () ;

}

else

{

arm_q15_to_float (prev_blk->data, in_array, L) ;

out_blk = prev_blk ; // reuse the previous block for output

}

if (block == NULL)

arm_fill_f32 (0.0, in_array + L, L) ;

else

arm_q15_to_float (block->data, in_array + L, L) ;

prev_blk = block ;

// write latest spectrum to relevant slot in the delay_line

arm_rfft_fast_f32 (&fft_instance, in_array, delay_line + state * 2*L, 0) ;

inc_mod (state, 1, Npart+1) ; // state indexes delay_line,

// the rest is output processing only, doesn't affect future state:

if (out_blk != NULL) // if starved of blocks, don't bother doing the output stuff

{

arm_fill_f32 (0.0, out_spectra, 2*L) ; // zero the output accumulator (DC / positive bins only)

int del_index = state ;

for (int i = 0 ; i < Npart ; i++) // loop through all the filter partitions

{

inc_mod (del_index, -1, Npart+1) ; // delayline index goes backwards (it is a convolution, not correlation!)

// multiply each delayline spectrum by relevant filter spectrum, accumulate in out_spectra (DC / positive bins only)

mul_acc_spectrum (filt_spectra + i * 2*L,

delay_line + del_index * 2*L,

out_spectra,

L) ;

}

reconstruct_neg_freqs (out_spectra, N) ; // needed only once before IFFT

arm_rfft_fast_f32 (&fft_instance, out_spectra, out_array, 1) ; // 1 means inverse FFT

arm_float_to_q15 (out_array + L, out_blk->data, L) ; // just need second half of samples (overlap-save)

transmit (out_blk) ;

release (out_blk) ;

}

}

void AudioFilterUnipart::setFIRCoefficients (int size, float * coeffs)

{

const int L = AUDIO_BLOCK_SAMPLES ; // less cumbersome

__disable_irq() ; // turn off update() before tinkering

Npart = -1 ;

active = false ;

__enable_irq() ;

// tidy previous state

if (filt_spectra != NULL) delete[] filt_spectra ;

if (delay_line != NULL) delete[] delay_line ;

filt_spectra = NULL ;

delay_line = NULL ;

if (size == 0 || coeffs == NULL) // shutdown and release resources if no new filter

return ;

int number_parts = (size + L-1) / L ; // setup state for update() loop

filt_spectra = new float [(number_parts + 1) * 2*L] ; // entries overlap by L complex values, need extra L complex at end

if (filt_spectra == NULL)

return ;

delay_line = new float [(number_parts + 2) * 2*L] ; // entries overlap by L complex values, and we also wrap, need two extra L at end

if (delay_line == NULL)

{

delete[] filt_spectra ;

filt_spectra = NULL ;

return ;

}

//Serial.print ("Npart ") ; Serial.println (number_parts) ;

//int cmults = 2 * 8 * 256/2 + number_parts * L;

//Serial.print (1.0*cmults/L) ; Serial.println (" complex muls per sample") ;

int index = 0 ;

for (int i = 0 ; i < number_parts ; i++)

{

arm_copy_f32 (coeffs + index, in_array, L) ;

if (index+L > size)

arm_fill_f32 (0.0, in_array + (size-index), index+2*L-size) ; // last partition may be shorter

else

arm_fill_f32 (0.0, in_array + L, L) ;

arm_rfft_fast_f32 (&fft_instance, in_array, filt_spectra + 2*index, 0) ; // store filt spectrum

index += L ;

}

arm_fill_f32 (0.0, delay_line, (number_parts+1) * 2*L) ; // zero out delay line for clean startup

__disable_irq() ;

state = 0 ;

Npart = number_parts ;

active = true ; // enable the update() method to start processing

__enable_irq() ;

}

void AudioFilterUnipart::pause (void)

{

__disable_irq ();

active = false ;

__enable_irq ();

}

void AudioFilterUnipart::resume (void)

{

__disable_irq ();

if (Npart > 0) // can only resume if a filter has been defined

active = true ;

__enable_irq ();

}

int AudioFilterUnipart::partitionCount (void)

{

return Npart ;

}

int AudioFilterUnipart::heapUsage (void)

{

const int L = AUDIO_BLOCK_SAMPLES ; // processing chunk size

return 4 * ((Npart + 1) * 2*L + (Npart + 2) * 2*L) ; //bytes used by filt_spectra and delay_line

}

#elif defined(KINETISL)

void AudioFilterUnipart::update (void)

{

audio_block_t * block = receiveReadOnly();

if (block != NULL)

release (block) ;

}

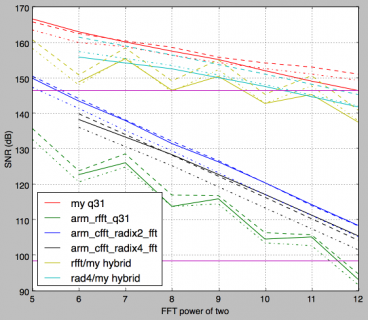

#endifI've been investigating the performance of the arm q31 fixed point FFT CMSIS routines, in particular measuring

the performance of taking a waveform, pushing it through the FFT and then the relevant inverse FFT and comparing

to the original signal. This is clearly of interest for fast convolution code for two reasons - some processors have

slower floating point than fixed point, and 32 bit fixed point promises a better signal/noise ratio than single floats

for the same memory footprint.

It turns out that the arm routines for both forward and inverse FFT divide by N (where N is the size of the FFT).

For the forward direction this is understandable, in order to prevent overflow in intermediate and final results

for fixed point - this allows an arbitrary input waveform in the range -1 to +1 (Q31 is fixed point 1.31 format),

yet yields spectral components with a maximum magnitude of 1.

However for the inverse mapping this is usually a mistake, and it throws away P bits of precision where N=2^P.

And in particular IFFT(FFT(x)) = x/N

Normally standard semantics have IFFT(FFT(x)) = Nx, and you have to chose when to divide out the N, but

the q31 routines divide by N in both directions without giving you the option to prevent it.

For spectral analysis you'd divide by N in the forward direction. For any mapping like convolution where you

apply the inverse FFT to a spectrum it doesn't really matter when you divide by N, so long as you do it once!

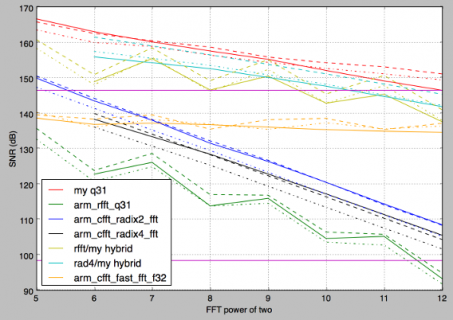

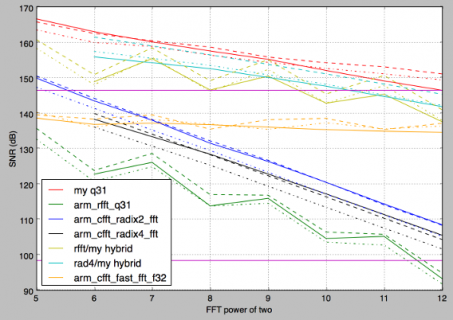

So here's a quick summary of the performance of doing FFT->IFFT for the arm_rfft_q31, arm_cfft_radix2_q31,

arm_cfft_radix4_q31, together with my FFT coded to do divide-by-N only in the forward direction, and some

hybrid schemes using my invertse FFT after one of the arm forward FFTs:

The solid lines represent a random signal, the dashed lines a single sinusoid, and the dash-dot lines are a two-tone

sinusoidal signal. The variation between them is interesting, I've not thought about why, but it gives some idea of

the variation in the data.

The two magenta lines represent the SNR of 24 and 16 bits. The 6dB drop as the FFT size doubles clearly shows

for the arm routines, together with a smaller rate of drop for my routine and the hybrid cases. Also of note is the

oscillating behaviour of the SNR for the rfft_q31 version - the real fft as I understand it uses the standard trick

of packing 2N samples into N complex numbers allowing the actual FFT to be of half the size it would otherwise be,

I suspect its choosing the radix4 fft when it can (faster), the radix2 otherwise, leading to the oscillating SNR performance.

A conclusion is that despite the 32 bit fixed point representation the q31 CMSIS routines aren't giving much/any better

precision than the single float routines for this style of FFT -> IFFT processing, and that this is fixable by using an

implementation of inverse FFT which doesn't throw away bits.

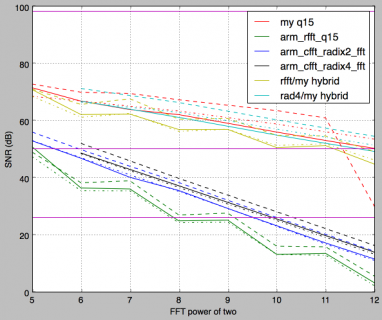

Another conclusion is that the situation for the q15 inverse FFT is _much_ worse, and its basically unfit for most use-cases,

as for instance a 1024 point q15 inverse FFT is effectively somewhat less than 6 bit precision!

I am thinking of creating a little FFT library to provide better fixed-point FFT support to address these issues.

If anyone wants a preview of the code let me know...

the performance of taking a waveform, pushing it through the FFT and then the relevant inverse FFT and comparing

to the original signal. This is clearly of interest for fast convolution code for two reasons - some processors have

slower floating point than fixed point, and 32 bit fixed point promises a better signal/noise ratio than single floats

for the same memory footprint.

It turns out that the arm routines for both forward and inverse FFT divide by N (where N is the size of the FFT).

For the forward direction this is understandable, in order to prevent overflow in intermediate and final results

for fixed point - this allows an arbitrary input waveform in the range -1 to +1 (Q31 is fixed point 1.31 format),

yet yields spectral components with a maximum magnitude of 1.

However for the inverse mapping this is usually a mistake, and it throws away P bits of precision where N=2^P.

And in particular IFFT(FFT(x)) = x/N

Normally standard semantics have IFFT(FFT(x)) = Nx, and you have to chose when to divide out the N, but

the q31 routines divide by N in both directions without giving you the option to prevent it.

For spectral analysis you'd divide by N in the forward direction. For any mapping like convolution where you

apply the inverse FFT to a spectrum it doesn't really matter when you divide by N, so long as you do it once!

So here's a quick summary of the performance of doing FFT->IFFT for the arm_rfft_q31, arm_cfft_radix2_q31,

arm_cfft_radix4_q31, together with my FFT coded to do divide-by-N only in the forward direction, and some

hybrid schemes using my invertse FFT after one of the arm forward FFTs:

The solid lines represent a random signal, the dashed lines a single sinusoid, and the dash-dot lines are a two-tone

sinusoidal signal. The variation between them is interesting, I've not thought about why, but it gives some idea of

the variation in the data.

The two magenta lines represent the SNR of 24 and 16 bits. The 6dB drop as the FFT size doubles clearly shows

for the arm routines, together with a smaller rate of drop for my routine and the hybrid cases. Also of note is the

oscillating behaviour of the SNR for the rfft_q31 version - the real fft as I understand it uses the standard trick

of packing 2N samples into N complex numbers allowing the actual FFT to be of half the size it would otherwise be,

I suspect its choosing the radix4 fft when it can (faster), the radix2 otherwise, leading to the oscillating SNR performance.

A conclusion is that despite the 32 bit fixed point representation the q31 CMSIS routines aren't giving much/any better

precision than the single float routines for this style of FFT -> IFFT processing, and that this is fixable by using an

implementation of inverse FFT which doesn't throw away bits.

Another conclusion is that the situation for the q15 inverse FFT is _much_ worse, and its basically unfit for most use-cases,

as for instance a 1024 point q15 inverse FFT is effectively somewhat less than 6 bit precision!

I am thinking of creating a little FFT library to provide better fixed-point FFT support to address these issues.

If anyone wants a preview of the code let me know...

And here's the graph for q15 versions - the magenta lines are 16 bit, 8 bit and 4 bit SNR's respectively - basically its

poor just in the forward direction, the round trip is pretty much beyond use, the nadir being a 2dB SNR for rfft_q15!

The anomoly on the red dashed curve is probably an overflow, I probably set the signal level a tad too high.

poor just in the forward direction, the round trip is pretty much beyond use, the nadir being a 2dB SNR for rfft_q15!

The anomoly on the red dashed curve is probably an overflow, I probably set the signal level a tad too high.

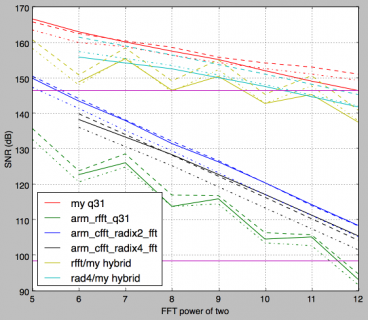

I've updated the q31 graph to include the single float performance (in orange):

The floating point FFT/IFFT holds up very well, being less sensitive to larger FFT sizes and about 22 bits

equivalent across the board. The magenta lines represent the theoretical 24 and 16 bit SNR's

There's an error in the legend, the float routine used is arm_rfft_fast_f32, not the cfft... Its also the

fastest on T4.

The floating point FFT/IFFT holds up very well, being less sensitive to larger FFT sizes and about 22 bits

equivalent across the board. The magenta lines represent the theoretical 24 and 16 bit SNR's

There's an error in the legend, the float routine used is arm_rfft_fast_f32, not the cfft... Its also the

fastest on T4.

Sorry for delay...you are right..., I'm using the "partitioned convolution code" to convolve an IR file with an audio signal and the HW is composed by the Teensy4.0 + Audioshield.

It's a white noise, always present under the audio signal; after some attempts to understand from where noise comes I suspect it is generated by the codec, no way to reduce it...

Any suggestions ?

Then I'm thinking to replace the Audioshield and try a WM8960 codec (pi hat), is there a "convolution code" (or some suggestions) using this type of codec, working at 24 bit resolution ?

Thanks

It's a white noise, always present under the audio signal; after some attempts to understand from where noise comes I suspect it is generated by the codec, no way to reduce it...

Any suggestions ?

Then I'm thinking to replace the Audioshield and try a WM8960 codec (pi hat), is there a "convolution code" (or some suggestions) using this type of codec, working at 24 bit resolution ?

Thanks

Sorry for delay...you are right..., I'm using the "partitioned convolution code" to convolve an IR file with an audio signal and the HW is composed by the Teensy4.0 + Audioshield.

It's a white noise, always present under the audio signal; after some attempts to understand from where noise comes I suspect it is generated by the codec, no way to reduce it...

Any suggestions ?

Then I'm thinking to replace the Audioshield and try a WM8960 codec (pi hat), is there a "convolution code" (or some suggestions) using this type of codec, working at 24 bit resolution ?

Thanks

It's a white noise, always present under the audio signal; after some attempts to understand from where noise comes I suspect it is generated by the codec, no way to reduce it...

Any suggestions ?

Then I'm thinking to replace the Audioshield and try a WM8960 codec (pi hat), is there a "convolution code" (or some suggestions) using this type of codec, working at 24 bit resolution ?

Thanks

Only to be clear, CMSIS does not divide by N to normalize the FFT/IFFT but to avoid overflow especially in 16 bit FFTNormally standard semantics have IFFT(FFT(x)) = Nx, and you have to chose when to divide out the N, but

the q31 routines divide by N in both directions without giving you the option to prevent it.

For spectral analysis you'd divide by N in the forward direction. For any mapping like convolution where you

apply the inverse FFT to a spectrum it doesn't really matter when you divide by N, so long as you do it once!

So in order to get proper results one could multiply after FFT and IFFT data with sqrt(N) (multiply by 32 for 1024 point FFT)

This does, however, not improve the quality of the 16-bit processing, here IFFT(FFT(x))

Nevertheless, the graphs are interesting also it does not show the improvements due to radix-8

Typically, a mixed radix FFT follows the radix-8, radix-4 and randix-2 preference list. 512-point is 3x radix8, 1024 point is radix2 followed by 3 radix-8

AFAIK, radix-8 is implemented in CMIS

I don't think the CMSIS routines have radix8 option - you would see a regular variation every 3 bits

if so I suggest. Radix8 might have issues with running out of registers or instruction cache even,

or simply be a case of diminishing returns - radix4 has about 50% of the memory traffic of radix2

which is a big win - the gain in actual FPU operations is smaller.

every sample is distributed roughly the same, being dependent on the signal amplitude. In the reverse direction the data is sparse, only

a few data points being anything other than the noise floor - for typical uses. The CMS routines shift right one bit per stage (radix2) and

two bits per stage (radix4) to prevent overflow in the forward direction. The inverse operations also do this as its the same code apart

from the conjugate twiddle factors being used.

Note that the version of CMSIS used for the Teensy is not the most recent - which is why arm_cfft_radixX_q31/q15 routines are being

used at all (they are superseded by arm_cfft_q31/q15 in later versions)

if so I suggest. Radix8 might have issues with running out of registers or instruction cache even,

or simply be a case of diminishing returns - radix4 has about 50% of the memory traffic of radix2

which is a big win - the gain in actual FPU operations is smaller.

Well, not for q15 or q31, the bits have already been thrown away. In the forward direction the incoming data is expected to be dense,So in order to get proper results one could multiply after FFT and IFFT data with sqrt(N) (multiply by 32 for 1024 point FFT)

every sample is distributed roughly the same, being dependent on the signal amplitude. In the reverse direction the data is sparse, only

a few data points being anything other than the noise floor - for typical uses. The CMS routines shift right one bit per stage (radix2) and

two bits per stage (radix4) to prevent overflow in the forward direction. The inverse operations also do this as its the same code apart

from the conjugate twiddle factors being used.

Note that the version of CMSIS used for the Teensy is not the most recent - which is why arm_cfft_radixX_q31/q15 routines are being

used at all (they are superseded by arm_cfft_q31/q15 in later versions)

Well, not for q15 or q31, the bits have already been thrown away.

That is why roundtrip is so poor, right?

Anyhow, I'm using T4 with arm_cfft_f32.c which uses radix8, to avoid the 16 bit CMSIS work arounds.

I know you know it but for others reading this thread:

IFFT and FFT are exactly the same function except input and results in IFFT are complex conjugated and results are normalized by N

as seen from

Code:

/**

@brief Processing function for the floating-point complex FFT.

@param[in] S points to an instance of the floating-point CFFT structure

@param[in,out] p1 points to the complex data buffer of size <code>2*fftLen</code>. Processing occurs in-place

@param[in] ifftFlag flag that selects transform direction

- value = 0: forward transform

- value = 1: inverse transform

@param[in] bitReverseFlag flag that enables / disables bit reversal of output

- value = 0: disables bit reversal of output

- value = 1: enables bit reversal of output

@return none

*/

void arm_cfft_f32(

const arm_cfft_instance_f32 * S,

float32_t * p1,

uint8_t ifftFlag,

uint8_t bitReverseFlag)

{

uint32_t L = S->fftLen, l;

float32_t invL, * pSrc;

if (ifftFlag == 1U)

{

/* Conjugate input data */

pSrc = p1 + 1;

for (l = 0; l < L; l++)

{

*pSrc = -*pSrc;

pSrc += 2;

}

}

switch (L)

{

case 16:

case 128:

case 1024:

arm_cfft_radix8by2_f32 ( (arm_cfft_instance_f32 *) S, p1);

break;

case 32:

case 256:

case 2048:

arm_cfft_radix8by4_f32 ( (arm_cfft_instance_f32 *) S, p1);

break;

case 64:

case 512:

case 4096:

arm_radix8_butterfly_f32 ( p1, L, (float32_t *) S->pTwiddle, 1);

break;

}

if ( bitReverseFlag )

arm_bitreversal_32 ((uint32_t*) p1, S->bitRevLength, S->pBitRevTable);

if (ifftFlag == 1U)

{

invL = 1.0f / (float32_t)L;

/* Conjugate and scale output data */

pSrc = p1;

for (l= 0; l < L; l++)

{

*pSrc++ *= invL ;

*pSrc = -(*pSrc) * invL;

pSrc++;

}

}

}Ah if the float version is using radix8 that explains the very shallow slope of the SNR graph, as the noise should increase by only 1/6th of a bit

per stage theoretically. There are 32 single-float registers which is enough for radix8 without spilling - this would not be the case for integer

registers I believe (nor double-float).

per stage theoretically. There are 32 single-float registers which is enough for radix8 without spilling - this would not be the case for integer

registers I believe (nor double-float).

- Status

- Not open for further replies.