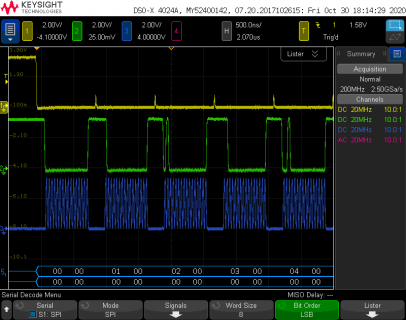

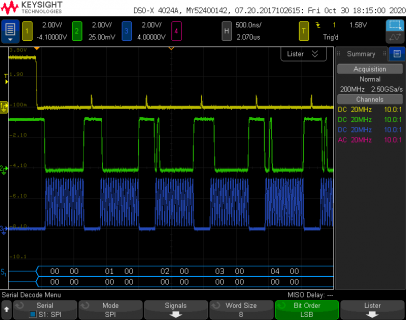

Thanks for your quick replies. To clarify, when I said "choke" I meant that nothing was output on SPI1 (neither CLK nor DATA), either on my logic analyzer or when I probed the pins with analog probes. Here is a picture of my setup working, with the analyzer plugged in.

I ran my tests again this morning after reading your emails, and everything worked; I really don't know what changed. Is there any way I could have broken something that persisted across code uploads (but fixed itself when I power-cycled the board overnight)? I had been experimenting with writing directly to registers.

The code I uploaded yesterday was a minimal test program, but ultimately I want to write directly to the DACs as fast as possible while still being able to toggle a sync line. A bigger snippet of code is below; I basically just cannibalized the `transfer16` function. I'm also considering using SPI2 to control a third DAC, for full X, Y and Z control of a vector monitor. Alternatively, I might piggyback Z onto the X or Y DAC, because most of the time I need to delay for a bit when turning the beam on or off anyway (and a delay just means leaving the DAC values unchanged, since the stream of data going to the DACs is effectively drawing the scene).
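For the Z piggyback idea, here is a minimal sketch of how one dual-channel DAC could carry Z in the hold slots. It assumes an MCP4922-style 16-bit command word (bit 15 selects channel A or B, bit 13 selects 1x gain, bit 12 enables the output, bits 11..0 are data); that format is an assumption, so check it against your DAC's datasheet. It also assumes X is on channel A and Z on channel B, and `dacWord`, `holdBeam` and `setBeam` are hypothetical helpers.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Assumed MCP4922-style command bits (verify against your DAC's datasheet).
constexpr uint16_t kSelectB = 1u << 15;  // 1 = write channel B, 0 = channel A
constexpr uint16_t kGain1x  = 1u << 13;  // 1 = 1x output gain
constexpr uint16_t kActive  = 1u << 12;  // 1 = output enabled (not shut down)

// Build one 16-bit word for a 12-bit sample on channel A (X) or B (Z).
uint16_t dacWord(bool channelB, uint16_t value12) {
  assert(value12 <= 0x0FFF);
  return static_cast<uint16_t>((channelB ? kSelectB : 0) | kGain1x | kActive | value12);
}

// Hold the beam still: repeating the last word leaves both DAC outputs unchanged.
void holdBeam(std::vector<uint16_t>& xStream, std::size_t samples) {
  if (xStream.empty()) return;
  const uint16_t last = xStream.back();  // copy first; insert may reallocate
  xStream.insert(xStream.end(), samples, last);
}

// Turn the beam on or off by spending one slot on channel B (Z);
// channel A (X) keeps its last value, so the spot does not move.
void setBeam(std::vector<uint16_t>& xStream, uint16_t z12, std::size_t settleSamples) {
  xStream.push_back(dacWord(true, z12));
  holdBeam(xStream, settleSamples);
}
```

The Y stream would need the same number of (repeated) words so the two SPI ports stay in lockstep. MCP4922-style parts also clock data in MSB first, which is worth checking against the `LSBFIRST` setting.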

Code:

```cpp
#include <SPI.h>

// LPSPI register offsets (i.MX RT1062)
#define SPI_TCR 0x60  // transmit command register
#define SPI_TDR 0x64  // transmit data register
#define SPI_RSR 0x70  // receive status register
#define SPI_RDR 0x74  // receive data register

// SPI uses LPSPI4 and SPI1 uses LPSPI3 on the Teensy 4
#define SPI0ADDR(x) (*(volatile uint32_t *)(0x403A0000 + (x)))
#define SPI1ADDR(x) (*(volatile uint32_t *)(0x4039C000 + (x)))

#define PAYLOAD 4096
uint16_t dac[PAYLOAD];

#define DACX_SYNC_PIN 2
#define DACY_SYNC_PIN 3

void setup()
{
  // set the DAC sync (chip select) pins as outputs, idling high
  pinMode(DACX_SYNC_PIN, OUTPUT);
  pinMode(DACY_SYNC_PIN, OUTPUT);
  digitalWriteFast(DACX_SYNC_PIN, HIGH);
  digitalWriteFast(DACY_SYNC_PIN, HIGH);

  // initialize SPI:
  SPI.begin();
  SPI.beginTransaction(SPISettings(32000000, LSBFIRST, SPI_MODE0));
  SPI1.begin();
  SPI1.beginTransaction(SPISettings(32000000, LSBFIRST, SPI_MODE0));

  for (int i = 0; i < PAYLOAD; i++)
  {
    dac[i] = i; // simple ramp test pattern
  }

  // switch both ports to 16-bit frames (same trick transfer16 uses)
  SPI0ADDR(SPI_TCR) = (SPI0ADDR(SPI_TCR) & 0xfffff000) | LPSPI_TCR_FRAMESZ(15);
  SPI1ADDR(SPI_TCR) = (SPI1ADDR(SPI_TCR) & 0xfffff000) | LPSPI_TCR_FRAMESZ(15);
}

void loop()
{
  for (int i = 0; i < PAYLOAD; i++)
  {
    // pull sync low before loading data, so it always leads the first clock
    digitalWriteFast(DACX_SYNC_PIN, LOW);
    digitalWriteFast(DACY_SYNC_PIN, LOW);

    SPI0ADDR(SPI_TDR) = dac[i]; // output x
    SPI1ADDR(SPI_TDR) = dac[i]; // output y

    // wait until each 16-bit frame has been clocked out (RX FIFO no longer
    // empty), as transfer16 does, instead of relying on a fixed delay
    while (SPI0ADDR(SPI_RSR) & LPSPI_RSR_RXEMPTY) ;
    (void)SPI0ADDR(SPI_RDR); // discard received word
    while (SPI1ADDR(SPI_RSR) & LPSPI_RSR_RXEMPTY) ;
    (void)SPI1ADDR(SPI_RDR);

    // rising sync edge latches the new values into the DACs
    digitalWriteFast(DACX_SYNC_PIN, HIGH);
    digitalWriteFast(DACY_SYNC_PIN, HIGH);
  }

  delay(10);
}
```
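A quick check of the timing behind the original `delayNanoseconds(510)`: one 16-bit frame at 32 MHz is exactly 500 ns on the wire, so the delay leaves about 10 ns of margin, not counting the latency between the TDR write and the first clock edge. If the actual SCK ends up below the requested 32 MHz (30 MHz below is only an illustrative value; the analyzer can measure the real one), the frame outlasts the delay and the sync line can rise before the 16th clock. Waiting on the RSR RXEMPTY bit, as `transfer16` does, removes that dependency. `frameTimeNs` and `delayCoversFrame` are hypothetical helpers for the arithmetic.

```cpp
#include <cassert>

// Time needed to shift one SPI frame of `bits` bits at `sckHz`, in nanoseconds.
double frameTimeNs(unsigned bits, double sckHz) {
  assert(sckHz > 0.0);
  return bits * 1e9 / sckHz;
}

// True if a fixed delay (ns) is at least as long as one frame on the wire.
// Ignores the TDR-to-first-edge latency, so a `true` result is optimistic.
bool delayCoversFrame(double delayNs, unsigned bits, double sckHz) {
  return delayNs >= frameTimeNs(bits, sckHz);
}
```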

I'm basically aiming to make an improved VST board:

https://github.com/osresearch/vst

The old Teensy 3 did not have enough memory to buffer a full frame of data, so it had to render the scene in real time, filling an SPI buffer that was DMA'd out to the DACs in the background. This would often cause sparkling or bright spots whenever the rendering speed changed. From what I can tell, the Teensy 4 should have no problem buffering an entire scene in advance and streaming it out at a fixed rate, with no DMA needed.
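As a rough budget for buffering whole frames: if X and Y are sent in parallel on SPI and SPI1 at about 500 ns per sample, a 50 Hz refresh leaves room for 40,000 samples per frame, or 160 KB at two 16-bit words per sample, which fits in the Teensy 4's 1 MB of RAM. Both the 50 Hz and the 500 ns figures are example values, not measurements. The sketch below sizes a frame that way and double-buffers it, so a new scene is rendered into one buffer while the other is streamed out. `Sample`, `samplesPerFrame` and `FrameBuffers` are hypothetical names; on the Teensy itself, statically allocated arrays may be preferable to `std::vector`, and the swap must not happen while the output code is mid-frame.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// One X/Y output sample (hypothetical layout: raw 16-bit DAC words).
struct Sample {
  uint16_t x;
  uint16_t y;
};

// How many samples fit in one refresh period at a fixed per-sample time.
std::size_t samplesPerFrame(uint32_t refreshHz, uint32_t samplePeriodNs) {
  assert(refreshHz > 0 && samplePeriodNs > 0);
  return 1000000000u / refreshHz / samplePeriodNs;
}

// Double buffer: render into back() while front() is being streamed out,
// then swap() at a frame boundary so output never waits on rendering.
class FrameBuffers {
 public:
  explicit FrameBuffers(std::size_t capacity) {
    front_.reserve(capacity);
    back_.reserve(capacity);
  }
  std::vector<Sample>& back() { return back_; }
  const std::vector<Sample>& front() const { return front_; }
  void swap() {
    std::swap(front_, back_);  // finished frame becomes the one being output
    back_.clear();             // old frame is discarded; capacity is kept
  }

 private:
  std::vector<Sample> front_;
  std::vector<Sample> back_;
};
```

Double buffering only pays off if rendering can overlap output, for example with output driven from a timer interrupt; with a busy output loop like the one above, rendering would have to happen between frames anyway.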

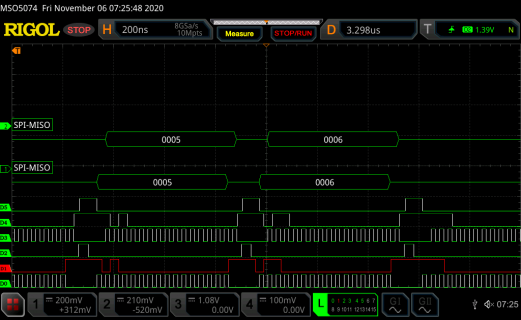

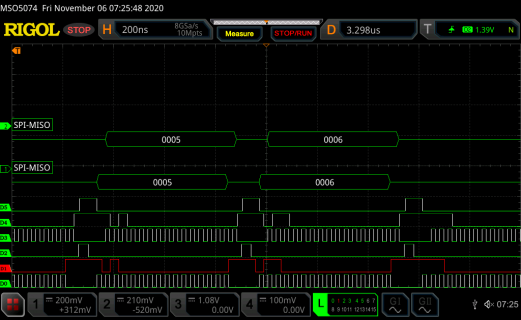

Here is a Teensy 3 running the old VST program:

(XY works a lot better on the older scope)

Thanks again for your suggestions; I should have waited a day before posting my question.