Hello,

I'm curious about the feasibility of, and interest in, controlling the Teensy Audio Library with Open Sound Control (OSC), and about implementing OSC as a built-in feature of the library. I'm considering working on this and would like to know whether anyone has useful input.

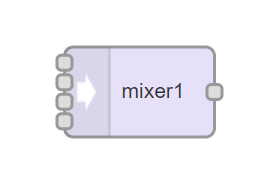

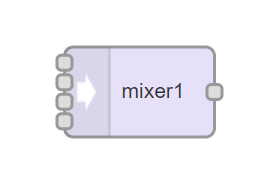

The goal is simple: for each audio object, write a helper object that automagically creates an OSC control address. Take, for example, the "Mixer" object in the audio library.

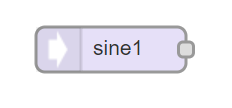

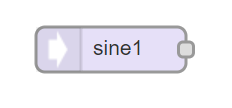

That object has the name "Mixer1" and a public function, "Gain", that takes a channel and a level. What if the proposed solution automatically created OSC addresses such as /mixer1/ch1/gain and /mixer1/ch2/level? As another example, the Sine object could be exposed as follows.

OSC address:

- /sine1/amplitude

- /sine1/frequency

- /sine1/phase
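As a rough illustration of the naming scheme, the addresses above could be derived from an object's instance name and its setter names. This is only a sketch in plain C++: `oscAddress` and `oscAddresses` are hypothetical helpers, not part of the Teensy Audio Library or CNMAT/OSC, and lowercasing the names is an assumption made to match the examples in this post.

```cpp
#include <cctype>
#include <string>
#include <vector>

// Hypothetical helper: lowercase a name so "Sine1" becomes "sine1".
static std::string toLower(const std::string& text) {
    std::string result;
    for (unsigned char c : text) {
        result += static_cast<char>(std::tolower(c));
    }
    return result;
}

// Hypothetical helper: build "/<object>/<parameter>" for one control.
std::string oscAddress(const std::string& objectName,
                       const std::string& parameter) {
    return "/" + toLower(objectName) + "/" + toLower(parameter);
}

// Build one address per parameter that an audio object exposes.
std::vector<std::string> oscAddresses(const std::string& objectName,
                                      const std::vector<std::string>& parameters) {
    std::vector<std::string> addresses;
    for (const std::string& parameter : parameters) {
        addresses.push_back(oscAddress(objectName, parameter));
    }
    return addresses;
}
```

Calling `oscAddresses("Sine1", {"amplitude", "frequency", "phase"})` yields the three addresses listed above.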

If this were done for each audio object, using a library, wouldn't that make it easier to build a custom device with the Teensy Audio Library? I haven't yet implemented OSC in any of my projects, but I have used MIDI. Unfortunately, MIDI doesn't seem like the ideal way to communicate with the Audio Library, because I had to write if statements for each MIDI channel I wanted to work with.

The following MIDI example shows how I monitor two sliders on my MIDI interface, which was designed with TouchMIDI.

Code:

    // TEENSY MIXER CONTROL GAINS

    // AUX 1
    if (channel == 3 && data1 == 51)
    {
        // Channel Volume Control
        volume3 = (float)data2 / 127; // save the volume
        // Calculate the total volume
        i = volume3 * (1 + gain3);
        Serial.println(i);
        // Set the volume
        mixerLeft.gain(1, i);
    }
    if (channel == 3 && data1 == 53)
    {
        // Channel Gain Control
        gain3 = (float)data2 / 127; // save the gain
        // Calculate the total volume
        i = volume3 * (1 + gain3);
        Serial.println(i);
        // Set the volume
        mixerLeft.gain(1, i);
    }

    // AUX 2
    if (channel == 4 && data1 == 51)
    {
        // Channel Volume Control
        volume4 = (float)data2 / 127; // save the volume
        // Calculate the total volume
        i = volume4 * (1 + gain4);
        Serial.println(i);
        // Set the volume
        mixerRight.gain(1, i);
    }
    if (channel == 4 && data1 == 53)
    {
        // Channel Gain Control
        gain4 = (float)data2 / 127; // save the gain
        // Calculate the total volume
        i = volume4 * (1 + gain4);
        Serial.println(i);
        // Set the volume
        mixerRight.gain(1, i);
    }

This code was a bit tedious to write and understand, and it required keeping a MIDI mapping table somewhere to track all the controls. The TouchMIDI HTML has corresponding markup like this:

    <!-- Aux 1 -->
    <div class="column">
      <div class="text" style="position: absolute">  Aux In</div>
      <div class="encoder" label="Digital Gain" midicc="4, 53" colour="#FB1CF3" storeid="s1m1"></div>
      <div class="slider" label="Volume" midicc="4, 51" colour="#14E528" storeid="s2m1"></div>
    </div>

OSC is a better fit here because of the way OSC addresses work. We already have a name for each audio object, and we know which functions each object offers and what arguments they accept. So when you build your controller elsewhere, you know exactly which address each control lives at. It seems to me that this would be a really nifty and feasible solution.
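To make the contrast with the if-statement approach concrete, here is a minimal sketch of address-based dispatch in plain C++. Everything in it is an assumption for illustration: `OscRouter` and `exposeMixer` are hypothetical names, `StubMixer` is a stand-in whose `gain(channel, level)` mirrors the call used in the MIDI code above, and mapping "ch1" to channel 0 is my guess at a sensible convention. It is not how CNMAT/OSC or the Audio Library do it.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>
#include <utility>

// Stand-in for a 4-channel mixer; only the gain() call shape matters here.
struct StubMixer {
    float gains[4] = {1.0f, 1.0f, 1.0f, 1.0f};
    void gain(unsigned int channel, float level) {
        assert(channel < 4);
        gains[channel] = level;
    }
};

// Hypothetical router: maps an OSC address to a handler taking one float.
class OscRouter {
public:
    using Handler = std::function<void(float)>;

    void add(const std::string& address, Handler handler) {
        handlers_[address] = std::move(handler);
    }

    // Returns false when no control is registered at the address.
    bool dispatch(const std::string& address, float value) const {
        auto it = handlers_.find(address);
        if (it == handlers_.end()) {
            return false;
        }
        it->second(value);
        return true;
    }

private:
    std::map<std::string, Handler> handlers_;
};

// Register "/<name>/ch1/gain" .. "/<name>/ch4/gain" for one mixer.
// The mixer must outlive the router, since handlers hold a reference to it.
void exposeMixer(OscRouter& router, const std::string& name, StubMixer& mixer) {
    for (unsigned int ch = 0; ch < 4; ++ch) {
        router.add("/" + name + "/ch" + std::to_string(ch + 1) + "/gain",
                   [&mixer, ch](float level) { mixer.gain(ch, level); });
    }
}
```

With this in place, one `exposeMixer(router, "mixer1", mixer)` call replaces a block of per-channel if statements, and an incoming message is handled with `router.dispatch("/mixer1/ch2/gain", 0.5f)`.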

If the OSC addresses were mapped out automatically, OSC control would come pretty much for free. It would standardize controlling the library with OSC, if you will. Right now I don't know of any examples that actually show how to control the Teensy with OSC. Does anyone know of any shared projects that show a full example, and maybe a corresponding control surface written for the desktop or browser? The CNMAT/OSC library has some examples for sending and receiving messages, but they are still a little foggy to me.
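For reference, this is roughly what a control surface would put on the wire for a single float control, following the OSC 1.0 message layout: the address and the type tag string are each null-terminated and padded to a multiple of four bytes, followed by a big-endian 32-bit float. `encodeOscFloat` is a hypothetical helper written for this post, not a CNMAT/OSC function, and it assumes the platform's `float` is a 32-bit IEEE 754 value.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string>
#include <vector>

// Append an OSC string: the characters, then 1 to 4 null bytes so that the
// total length is a multiple of 4 and at least one terminator is present.
static void appendPaddedString(std::vector<std::uint8_t>& out,
                               const std::string& text) {
    out.insert(out.end(), text.begin(), text.end());
    std::size_t padding = 4 - (text.size() % 4);
    out.insert(out.end(), padding, 0);
}

// Hypothetical helper: encode one OSC message carrying a single float.
std::vector<std::uint8_t> encodeOscFloat(const std::string& address,
                                         float value) {
    static_assert(sizeof(float) == sizeof(std::uint32_t),
                  "this sketch assumes a 32-bit float");
    std::vector<std::uint8_t> packet;
    appendPaddedString(packet, address);  // e.g. "/sine1/frequency"
    appendPaddedString(packet, ",f");     // type tag string: one float

    // Copy the float's bit pattern, then emit it most significant byte first.
    std::uint32_t bits = 0;
    std::memcpy(&bits, &value, sizeof(bits));
    for (int shift = 24; shift >= 0; shift -= 8) {
        packet.push_back(static_cast<std::uint8_t>((bits >> shift) & 0xFF));
    }
    return packet;
}
```

The resulting bytes could then be sent over UDP or wrapped in SLIP for a serial link; which transport suits the Teensy best is a separate question.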

Does anyone know how I would go about creating something like this? I envision a separate library (hopefully), so that we wouldn't need to push changes into the audio library directly and create more work and overhead for the library itself.

Any thoughts? Any interest?

Jay
