I am working on a sensor triggering project with a teensy and am nearing the end, but have stumbled upon some very haphazard latency in the serial communication to the computer. The code is relatively long at this point so I won't post everything but the basic gist is

Instead of listing every last piece of code, I tried to describe it as meaningfully as I thought was necessary, hopefully this is understandable.

Now this is totally capable of generating the trigger square wave with low variance along with the corresponding timestamp

The problem

The problem is there is often delay in communicating this 10 byte message to the PC. I tried to do some basic logging of the sampling time of this message (with a C++ node and std::chrono::system_clock::now()) and found that, for two serparate runs:

Note logging happened by way of

where

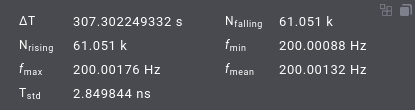

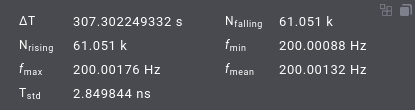

The output rate here is 200Hz, I believe there was a message which was delayed until arriving almost simultaneously with the following sampling time, and this is the reason for the max_ms being double the sampling rate and the min_ms being so small. As far as I understand, USB serial w/ teensy 4.1 isn't set by Serial.begin(9600); and is actually either 12 or 480 Mbit/sec, following USB speeds.

A 10 byte message at 12 Mbit/sec is should take 0.0067 ms = (10 × 8) ÷ (12*10^6) × 1000. So that is far and away sufficiently fast. I figure this means that the problem lies in the serial interface to my laptop, just to note I have limited experience with usb communication at a very hands-on level so please correct me if I say anything wrong.

Testing USB comm latency

I also employed a different test of the latency, I can't quite remember the source but I found this in another forum post:

For the same message size, this seems to be quite small. In all my attempts bounded by 0.5ms, so not entirely sure what the problem is that I'm experiencing. To be fair, when it happens it happens extremely infrequently, so my trying this five or six times perhaps isn't sufficient to expose the problem.

Question

I know for FTDI one can reduce the latency timer for devices sending small messages such that they don't get bunched up into a single packet. But I am connected to the teensy through /dev/ttyACM0, so I guess this isn't an option. Are there some options I can look into for checking the usb port more often, or some methods I can apply for reducing this latency? As the signal rate is 200 Hz, latency >1ms begins to be a little problematic.

Code:

#include <Arduino.h>

#include <TeensyTimerTool.h>

const uint8_t _pin_ = 1; // output pin

IntervalTimer g_trigger_timer;

TeensyTimerTool::OneShotTimer g_one_shot_timer;

elapsedMicros g_micros_since_second;

volatile uint32_t g_seconds;

void periodicCallback()

{

// get current time [seconds, micros since second]

// set trigger pin HIGH

digitalWriteFast(_pin_, HIGH);

// set one shot timer to high time (10us)

// g_one_shot_timer.trigger(10)

// pack information into message

// [seconds, micros, count]

// Send message (10 bytes)

// Serial.write(msg, 10);

// Serial.send_now();

}

elapsedMillis g_led_millis;

uint32_t g_led_interval = 1000;

void setup()

{

// setting pin modes

// setting PPM interrupt for rising edge with callback:

// void ppmInterrupt(){

// g_micros_since_second = 0;

// g_seconds++;

// }

setup_pins();

Serial.begin(9600);

while (!Serial) {

}

// connecting to RTC for PPM timekeeping signal

if (!connectToRTC()) {

Serial.println("RTC problems");

}

g_trigger_timer.begin(periodicCallback, 5000);

g_one_shot_timer.begin([] { digitalWriteFast(_pin_, LOW); });

g_led_millis = 0;

}

void loop()

{

if (g_led_millis > g_led_interval) {

digitalToggleFast(LED_BUILTIN);

g_led_millis -= g_led_interval;

}

}Now this is totally capable of generating the trigger square wave with low variance along with the corresponding timestamp

The problem

The problem is there is often delay in communicating this 10 byte message to the PC. I tried to do some basic logging of the sampling time of this message (with a C++ node and std::chrono::system_clock::now()) and found that, for two serparate runs:

Code:

serialRate: mean_ms=4.99, max_ms=11.99, min_ms=0.02, total_s=304.79

serialRate: mean_ms=5.00, max_ms=8.47, min_ms=2.11, total_s=562.42Note logging happened by way of

Code:

while(True){

if (serial_conn_->available()) {

if (first_iteration) {

first_iteration = false;

} else {

profiler_.stop("serialRate");

}

serial_conn_->read(buffer, expected_size_);

profiler_.start("serialRate");

}

}- serial_conn_ comes from https://github.com/wjwwood/serial

- buffer is std::vector<uint8_t>

- expected_size_ = 10

- profiler_.start/profiler_.stop makes called to std::chrono::system_clock::now() for timing

The output rate here is 200Hz, I believe there was a message which was delayed until arriving almost simultaneously with the following sampling time, and this is the reason for the max_ms being double the sampling rate and the min_ms being so small. As far as I understand, USB serial w/ teensy 4.1 isn't set by Serial.begin(9600); and is actually either 12 or 480 Mbit/sec, following USB speeds.

A 10 byte message at 12 Mbit/sec is should take 0.0067 ms = (10 × 8) ÷ (12*10^6) × 1000. So that is far and away sufficiently fast. I figure this means that the problem lies in the serial interface to my laptop, just to note I have limited experience with usb communication at a very hands-on level so please correct me if I say anything wrong.

Testing USB comm latency

I also employed a different test of the latency, I can't quite remember the source but I found this in another forum post:

Code:

#include <Arduino.h>

void setup()

{

Serial.begin(9600);

Serial.flush();

delay(1000);

}

const size_t buf_len = 10;

uint8_t buffer[buf_len];

void loop()

{

size_t buf_pos = 0;

for (size_t buf_pos = 0; buf_pos < buf_len; buf_pos++) {

while (Serial.available() <= 0)

;

int c = Serial.read();

buffer[buf_pos] = c;

if (c == 13 || c == 10) {

Serial.write(buffer, buf_pos + 1);

Serial.send_now();

break;

}

}

}

Code:

#!/usr/bin/env python3

import serial

import sys

import io

import time

message_count = 10

if len(sys.argv) != 2:

print("Usage: test-lat <Serial Port>")

raise SystemExit

port_name = sys.argv[1]

ser = serial.Serial(port_name, 9600, xonxoff=False, rtscts=False, dsrdtr=False)

ser.flushInput()

ser.flushOutput()

sio = io.TextIOWrapper(io.BufferedRWPair(ser, ser, 1), encoding="ascii", newline="\n")

sio._CHUNK_SIZE = 1

max_time = 0

total_time = 0

for i in range(message_count):

start_time = time.perf_counter()

out_msg = str(i) + "\n"

sio.write(out_msg)

sio.flush()

in_msg = sio.readline()

if out_msg != in_msg:

print("Error: invalid response: ", in_msg)

end_time = time.perf_counter()

elapsed = end_time - start_time

if elapsed > max_time:

max_time = elapsed

total_time += elapsed

print(f"Message count: {message_count}")

print(f"Average time: {total_time / message_count * 1e3} ms")

print(f"Max time: {max_time*1e3} ms")

ser.close()

Code:

❯ python3 test_serial_latency.py /dev/ttyACM0

Message count: 10

Average time: 0.15015669050626457 ms

Max time: 0.22571900626644492 ms

❯ python3 test_serial_latency.py /dev/ttyACM0

Message count: 10

Average time: 0.11744970106519759 ms

Max time: 0.17464201664552093 ms

❯ python3 test_serial_latency.py /dev/ttyACM0

Message count: 10

Average time: 0.1389778859447688 ms

Max time: 0.2486809971742332 ms

❯ python3 test_serial_latency.py /dev/ttyACM0

Message count: 10

Average time: 0.1252930029295385 ms

Max time: 0.17271301476284862 ms

❯ python3 test_serial_latency.py /dev/ttyACM0

Message count: 10

Average time: 0.16377330175600946 ms

Max time: 0.2868599840439856 msQuestion

I know for FTDI one can reduce the latency timer for devices sending small messages such that they don't get bunched up into a single packet. But I am connected to the teensy through /dev/ttyACM0, so I guess this isn't an option. Are there some options I can look into for checking the usb port more often, or some methods I can apply for reducing this latency? As the signal rate is 200 Hz, latency >1ms begins to be a little problematic.