First things first

The serial problem at the moment is on the PC side. Sometimes old data is not removed from the receive buffer even though it has been read, and even the purge command does not clear it. There are no native USB_HID libraries, only a wrapper around the C++ version, and my attempt with that was even worse. I need to investigate the serial problem first before proceeding.

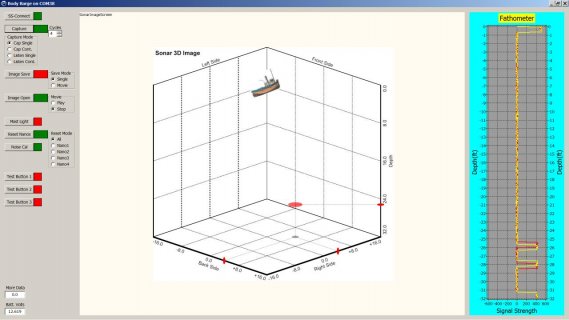

The handshaking scheme is necessary due to the nature of the data source. The shore station accumulates the sonar data from the boat over nRF24, then sends a six-byte message to the PC to say that the data is ready. Thereafter, the PC requests a particular packet from one of the four sonars and expects 128 bytes to be returned. This continues until all data has been recovered. In general, neither side knows when something will happen until informed by the other side.

I've been trying to find an IDE/RAD software package that has the features of Lazarus (visual Pascal) but uses C++. I just installed a trial version of C++ Builder by Embarcadero. It's so bloated with just a portion installed that I'm going to uninstall it without even trying it. I also have Ultimate++ and Code::Blocks to try, but I don't know if they are IDE/RAD types of package. Most of these packages are very skimpy on the component menus and object inspector details. Lazarus has everything up front, along with an Anchor Editor which I dearly love.

I'll keep trying until I get something to work. Thank you Paul for a great product and support.

Ed

Obviously everything Paul is saying is correct. Having recently gone through a similar process that was long and frustrating but ultimately successful, I will attempt to add what I can in the way of practical suggestions.

First of all, we need to know what data throughput and what latency your project really requires. From the description you give, it does not appear that latency can possibly be an issue, so I'll focus on throughput. You say you "receive 128 byte data packets at 2.25 Mbps" -- does that mean you have an average of 2.25 Mbit of sonar data that must be transferred each second?

Is 128 bytes a complete sonar ping response for a single handshake, or do you receive two thousand 128 byte packets in response to a single handshake?

Does the data need to be received in a certain amount of time, or is it just that you need to keep up with the volume of data so things don't pile up on the sending side?

= = = = =

Throughput will be determined by a combination of the actual data transfer time (related to the interface speed) and the time spent waiting for transfers to occur. Each handshake will consume a lot of coordination/waiting time that will waste transfer time, so the more handshaking you do per data aliquot, the lower your total throughput. By a lot.

If you control the code at both ends you can maximize your throughput headroom if you do as Paul has suggested: make the data source simply send data as soon as it exists. Put a header on the data (or put multiple frame headers on chunks of data) and simply begin sending it as soon as you can. Make the PC listen for data all the time, read the header (or the frame headers) and route the data accordingly (analysis, write to disk, etc.). I would probably define a virtual "channel number" for each sonar data source, so I could add additional sources easily, but there are many other ways to approach that part of the problem.
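As a rough illustration of the "header plus channel number" idea, here is a minimal Python sketch of the PC side. The frame layout (a two-byte sync marker, a one-byte channel number and a two-byte length) is purely an assumption made for this example, as are the names `make_frame` and `FrameParser`; nothing in this thread defines an actual format.

```python
import struct

# Hypothetical frame layout (an assumption, not taken from this thread):
#   2-byte sync marker | 1-byte channel | 2-byte payload length (little-endian) | payload
SYNC = b"\xA5\x5A"
HEADER = struct.Struct("<2sBH")  # 5 bytes in total


def make_frame(channel, payload):
    """Build one frame the way the sending side might."""
    return HEADER.pack(SYNC, channel, len(payload)) + payload


class FrameParser:
    """Collects raw bytes from the port and hands back complete frames."""

    def __init__(self):
        self._pending = bytearray()

    def feed(self, chunk):
        """Add newly read bytes; return a list of (channel, payload) tuples."""
        self._pending += chunk
        frames = []
        while True:
            start = self._pending.find(SYNC)
            if start < 0:
                # No marker yet: keep only the last byte, which could be
                # the first half of a marker split across two reads.
                del self._pending[:-1]
                break
            if start > 0:
                del self._pending[:start]  # drop junk before the marker
            if len(self._pending) < HEADER.size:
                break  # header not complete yet
            _, channel, length = HEADER.unpack_from(self._pending)
            end = HEADER.size + length
            if len(self._pending) < end:
                break  # payload not complete yet
            frames.append((channel, bytes(self._pending[HEADER.size:end])))
            del self._pending[:end]
        return frames


if __name__ == "__main__":
    parser = FrameParser()
    stream = make_frame(1, b"abc") + make_frame(3, bytes(128))
    # Feed the stream in arbitrary pieces, as a serial read would deliver it.
    for piece in (stream[:4], stream[4:20], stream[20:]):
        for channel, payload in parser.feed(piece):
            print("channel", channel, "got", len(payload), "bytes")
```

Because the parser keeps leftover bytes between calls, it does not matter where the serial reads happen to split the stream. Routing by channel then becomes a simple lookup on the first element of each tuple.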

If you *do* control both sides, I would *definitely* discard the concept of handshaking right off the bat, because it really adds nothing. Just set up a timer-driven routine on the PC side that listens on the port and keeps an inbound queue filled with data.

Virtual serial port transfer over USB is very good at getting the data to you in the right order -- it handles handshaking and retransmission etc. invisibly and quite reliably. When I first read this I was skeptical myself, but it's actually true. I move large amounts of streaming medical data, and although I did put a lot of safety code in place I have never observed any data loss in transit using virtual serial over USB. If you want to be absolutely certain, you will want to include sequence numbering in your outbound frames and make provision to request a retransmission of any data frame that is not received on the PC side.
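For the sequence-numbering safety net, a minimal sketch of the PC-side bookkeeping might look like the following. It assumes a one-byte sequence counter that wraps at 256; the counter width and the `SequenceTracker` name are illustrative choices, not something specified in this thread.

```python
class SequenceTracker:
    """Tracks a wrapping frame counter and reports which frames were skipped.

    Assumption: the sender puts a one-byte sequence number (0..255) in each
    frame and increments it by one per frame.
    """

    MODULUS = 256

    def __init__(self):
        self._expected = None  # unknown until the first frame arrives

    def check(self, seq):
        """Return the list of sequence numbers missing before `seq`."""
        if self._expected is None:
            missing = []
        else:
            gap = (seq - self._expected) % self.MODULUS
            missing = [(self._expected + i) % self.MODULUS for i in range(gap)]
        self._expected = (seq + 1) % self.MODULUS
        return missing


if __name__ == "__main__":
    tracker = SequenceTracker()
    for seq in (254, 255, 2, 3):
        lost = tracker.check(seq)
        if lost:
            print("request retransmission of frames", lost)
```

This simple version cannot tell a duplicated or late frame from a very large gap, so a real protocol would want a wider counter or an explicit window. The list it returns is what you would put in a retransmission request.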

In my case the limiting factor was that if the host PC did not read data fast enough, I would run out of buffer space on the sending device and would be forced to discard data. I used a circular buffer so this happened automatically, but I did monitor it and I put a "0x00, 0xFF" byte pair on the outbound feed every time there was data loss. That allowed me to see data loss easily in my displays without requiring me to send any ASCII error messages (additional serial print calls change the timing of the program).

At first I followed the published examples (MSDN and everywhere else), and they were either way too slow or appeared to lose data.

Way too slow:

Code:

    while (port.BytesToRead > 0)
    {
        int blah = port.ReadByte();  // one call into the driver per byte
        // do something with blah
    }

or this approach, which loses data because port.BytesToRead does not count ALL the bytes that are available to read.

Code:

    int count = port.BytesToRead;
    byte[] ByteArray = new byte[count];
    port.Read(ByteArray, 0, count);

The approach that suddenly gave me all my data and the promised transfer speeds was this (actual working code from a C# app doing well over 1 Mbps sustained). The async part of it is not necessary -- the magic is in knowing that IFF the timeout is zero and you request a big block of data, you will get back everything that exists and a count of bytes that were put into your buffer. Then when you know how much you got, just copy it into a properly sized buffer and use it as needed.

Code:

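    // Needs: using System; using System.IO; using System.Threading.Tasks;
    protected async Task<byte[]> ReadSerialBytes()
    {
        // Ask for a big block; the port returns whatever it actually has.
        byte[] buffer = new byte[4096];
        try
        {
            // actualLength is the number of bytes really placed in buffer.
            int actualLength = await serialPort1.BaseStream.ReadAsync(buffer, 0, buffer.Length);

            // Copy just the received bytes into a properly sized array.
            byte[] received = new byte[actualLength];
            Buffer.BlockCopy(buffer, 0, received, 0, actualLength);
            return received;
        }
        catch (IOException ex)
        {
            Console.Beep();
            statusMessage1("Error: " + ex.ToString());
            return null;
        }
    }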

That code example is in C#, but the correct approach is not just a .NET thing, because I experienced exactly the same thing when using Python, and I have seen Linux discussions that were substantially the same.
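For comparison, the same "ask for a big block, use what you get" pattern might be sketched in Python like this. The code only assumes an object with a `read(n)` method that returns at most `n` bytes without blocking; with the pyserial package (my assumption, the thread does not name a library) that is a port opened with `timeout=0`. The function name `drain` and the port name in the comment are placeholders.

```python
import io

# With pyserial (an assumption) the port would be opened roughly like this:
#     import serial
#     port = serial.Serial("COM5", timeout=0)   # "COM5" is a placeholder name
# With timeout=0, port.read(n) returns immediately with 0..n bytes.


def drain(port, handle, max_bytes=4096):
    """Take big reads until one comes back empty; return the total byte count.

    `handle` is called once per chunk, never once per byte.
    """
    total = 0
    while True:
        chunk = port.read(max_bytes)  # request a large block, accept less
        if not chunk:
            return total              # nothing waiting right now
        handle(chunk)
        total += len(chunk)


if __name__ == "__main__":
    # io.BytesIO stands in for the serial port so the sketch runs anywhere.
    fake_port = io.BytesIO(bytes(10000))
    chunks = []
    total = drain(fake_port, chunks.append)
    print(total, "bytes in", len(chunks), "reads")
```

In a real application `drain` would be called repeatedly from a timer or worker thread, with `handle` pushing each chunk onto the inbound queue.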

= = = = =

If you do not control the code at the source end and you are absolutely locked into multi-step handshaking, you may still be able to accomplish your throughput needs. The USB hardware will move something like 64 bytes every millisecond, but the PC operating system will not communicate with the USB hardware anywhere near that often. When your PC application requests that your operating system send or receive a single data chunk (regardless of the size of the chunk), your non-realtime operating system will typically wait 20 msec - 500 msec before it gets around to actually executing your read or write request. If you are running incorrectly configured backup or antivirus software (or your machine has phantom drives or any of a host of other problems) you may occasionally encounter periods of several seconds during which USB and serial port calls are suspended. If that kind of thing will sink you, you may need to add really big buffers running in an RTOS somewhere before the PC.

So when you *do* get to read from the port, you *really* don't want to read or write a single byte at a time, even though MANY of the code examples show it this way.

In my experience a round-trip handshake can take up to 1 second, during which time you are not receiving any data. However, once the data does start to flow, it comes very quickly -- provided you read ALL received bytes on each read, rather than trying to read a single byte at a time.

So the bottom line is that if latency is not the problem, you may lose a second for each data handshake but you may still be able to receive the data fast enough to keep up with the sources. You can easily test this, of course.
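To put rough numbers on that trade-off before testing it, a back-of-the-envelope model is enough: each handshake costs a fixed wait, after which the payload moves at the link rate. The sketch below is only that simple model; the function name and the example figures (a 1 second worst-case handshake, a 1 Mbps link, 128-byte versus much larger payloads) are illustrative inputs, not measurements.

```python
def effective_throughput_bps(payload_bytes, handshake_s, link_bps):
    """Average bits per second when every payload costs one handshake.

    Model: time per payload = handshake wait + payload bits / link rate.
    """
    payload_bits = payload_bytes * 8
    transfer_s = payload_bits / link_bps
    return payload_bits / (handshake_s + transfer_s)


if __name__ == "__main__":
    link = 1_000_000  # assumed sustained link rate in bits per second
    for payload in (128, 4096, 128 * 1024):
        for handshake in (0.02, 1.0):
            rate = effective_throughput_bps(payload, handshake, link)
            print(f"{payload:>7} bytes per handshake, "
                  f"{handshake:4.2f} s wait: {rate:12.0f} bit/s")
```

The model makes the point of the preceding paragraphs visible: with a 128-byte payload the handshake wait dominates completely, while a large payload per handshake recovers a substantial fraction of the link rate.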

Finally, if the throughput and latency you need can be met with virtual serial over USB or with a serial FTDI interface, I recommend you pursue serial transfers as opposed to native USB transfers. This is for one simple reason: I found relatively little in the way of guidance, tutorials, and project-oriented documentation for USB data transfers, whereas there are nearly 30 years of documentation available related to serial transfers. The bugs and gotchas in the Windows and Linux serial implementations are well understood and well documented, once you find the right sources. For USB transfers I found plenty of material aimed at comm engineers, but very little on the host operating system APIs.

Oh, yes -- some additional practical pointers:

Don't add sleep statements. They really won't help with this. The hardware really does work and it is much faster than your code or the OS, and the problems are almost certainly going to turn out to be in your own code and the way you're approaching the transfer.

Barring a bad USB cable, if the Teensy successfully puts data on the virtual serial port (or an FTDI port), that data WILL arrive at the host PC and it WILL be readable from the serial port. The problem is NOT happening between the underlying USB transport and the virtual serial port, so moving to native USB will not make anything fundamentally better.

If you read from a virtual serial port CORRECTLY, it will NOT fail to give you data it has received and it will NOT give you the same data twice. It also will not discard data that it has received. The only thing that will happen is that if you fail to read from the port fast enough you may not keep up with inbound data, in which case the port will fill up and will fail to request data from the sender, and the sender may overflow and discard data.

If you read from the serial port INCORRECTLY, you may appear to lose data. It's easy to be misled into reading incorrectly, and many of the published code examples out there have got it all wrong. You cannot trust the data_available interrupt, because it does not fire based on what you think would be triggering it. You also cannot trust the bytes_available concept because it does not reflect ALL the bytes that have actually been received and are available to be read.

If you read a virtual serial port, set the read timeout to zero (before opening the port). Then you have two choices. You can keep checking to see if there are *any* reported bytes available. If there are, the count of available bytes is not reliable so just pass a big buffer and request all bytes -- you'll get a return value telling how many you got. Alternatively just keep trying to read all bytes from the port, and check to see if you got any. The serial port likely will return data in chunks: when I'm streaming data, my OS serial port implementation returns 4096 bytes whenever it shows any bytes available, so 4096 is the useful buffer size in my case.

If you build a test sketch that just sends sequential bytes from the Teensy and a test PC application that just reads bytes from the virtual serial port in the manner I've described (without handshaking), you should be able to achieve throughput above 1 Mbps. If you *DON'T* achieve that throughput, you haven't got it right yet and you should post your code and other details of your attempts.
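The PC half of such a test could be sketched along these lines. It assumes the test sketch on the Teensy writes a one-byte counter that increments by one per byte and wraps from 255 to 0; that pattern, and the `SequentialChecker` name, are assumptions for the example. Feed it every chunk returned by the big-block read and it will count breaks in the sequence and report the average rate.

```python
import time


class SequentialChecker:
    """Checks that a byte stream counts 0, 1, ..., 255, 0, 1, ... and times it."""

    def __init__(self):
        self.expected = None  # unknown until the first byte arrives
        self.errors = 0       # number of breaks in the sequence
        self.total = 0        # bytes received so far
        self.start = None
        self.last = None

    def feed(self, chunk, now=None):
        """Check one chunk; `now` can be supplied for repeatable tests."""
        now = time.monotonic() if now is None else now
        if self.start is None:
            self.start = now
        for value in chunk:
            if self.expected is not None and value != self.expected:
                self.errors += 1  # lost, duplicated or corrupted data
            self.expected = (value + 1) & 0xFF
        self.total += len(chunk)
        self.last = now

    def bits_per_second(self):
        """Average rate between the first and the most recent chunk."""
        if self.start is None or self.last == self.start:
            return 0.0
        return self.total * 8 / (self.last - self.start)


if __name__ == "__main__":
    checker = SequentialChecker()
    checker.feed(bytes(range(256)) * 2, now=0.0)
    checker.feed(bytes([0, 1, 2, 5, 6]), now=1.0)  # bytes 3 and 4 are missing
    print("errors:", checker.errors, "rate:", checker.bits_per_second(), "bit/s")
```

If the error count stays at zero and the rate is above 1 Mbps, the read side is doing its job; any nonzero error count points back at how the port is being read.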

After you've got that working, THEN if you really need to, you can add handshaking and work to minimize the loss of throughput.

Not sure any of this helps in any way, but I thought I'd at least try to point you in the right direction...

glhf